Computer Graphics

Texture & Shadows

Last Time: Rasterization Details

Transformations & Projections

Transformations of Triangles

A triangle is an affine combination of three points \[ \vec{X}\of{\alpha, \beta, \gamma} \;=\; \alpha\vec{A} + \beta\vec{B} + \gamma\vec{C}\]

with \(\alpha+\beta+\gamma=1\) and \(\alpha, \beta, \gamma \geq 0\).

Affine transformations preserve affine combinations

- Triangles are transformed to triangles

Planar projections map triangles to triangles

- Similar derivation as for lines

Lighting

How to transform normal vectors?

A point \(\vec{x} = \transpose{(x,y,z,1)}\) lies on its tangent plane, specified by \(\vec{n} = \transpose{(n_x, n_y, n_z, d)}\): \[ n_x x + n_y y + n_z z + d = 0 \quad\Leftrightarrow\quad \transpose{\vec{n}} \vec{x} = 0 \]

The same equation should be satisfied after an affine transformation \(\mat{M}\) maps \(\vec{x}\) to \(\vec{x}'\) and \(\vec{n}\) to \(\vec{n}'\) \[ 0 \;=\; \transpose{\vec{n}'} \vec{x}' \;=\; \transpose{\vec{n}'} \mat{M} \vec{x} \;=\; \transpose{\left(\transpose{\mat{M}} \vec{n}' \right)} \vec{x} \]

Comparing the two equations yields \(\vec{n}' = \mat{M}^{\mathsf{-T}} \vec{n}\)

- In practice, use the upper-left \(3 \times 3\) block of \(\mat{M}\)

- Don’t forget to re-normalize \(\vec{n}'\)
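The normal transformation can be sketched in a few lines. This is a minimal illustration, not the lecture's implementation: it assumes the upper-left \(3 \times 3\) block is given as nested Python lists, and the helper names `cofactor_3x3` and `transform_normal` are made up for this example. It uses the fact that the cofactor matrix divided by the determinant equals the inverse transpose.

```python
import math


def cofactor_3x3(m):
    """Cofactor matrix of a 3x3 matrix; equals det(m) * inverse-transpose(m)."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return [
        [e * i - f * h, f * g - d * i, d * h - e * g],
        [c * h - b * i, a * i - c * g, b * g - a * h],
        [b * f - c * e, c * d - a * f, a * e - b * d],
    ]


def transform_normal(m, n):
    """Apply M^{-T} to the normal n and re-normalize the result."""
    cof = cofactor_3x3(m)
    # Determinant by expansion along the first row.
    det = sum(m[0][k] * cof[0][k] for k in range(3))
    if det == 0:
        raise ValueError("matrix is singular, normals cannot be transformed")
    v = [sum(cof[r][k] * n[k] for k in range(3)) / det for r in range(3)]
    length = math.sqrt(sum(x * x for x in v))
    return [x / length for x in v]


# Non-uniform scaling by 2 along x (hypothetical example matrix).
M = [[2.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
n_new = transform_normal(M, [1.0, 1.0, 0.0])
```

For this scaling, the tangent \((1,-1,0)\) maps to \((2,-1,0)\), and the transformed normal stays perpendicular to it. Multiplying the normal by \(\mat{M}\) itself would not preserve that.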

Rasterization

Bresenham Algorithm

Δx = x1-x0;

Δy = y1-y0;

d = 2*Δy - Δx;

ΔE = 2*Δy;

ΔNE = 2*(Δy - Δx);

set_pixel(x0, y0);

for (x = x0, y = y0; x < x1;)

{

if (d <= 0) { d += ΔE; ++x; }

else { d += ΔNE; ++x; ++y; }

set_pixel(x, y);

}

Good: Only integer arithmetic!
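A runnable version of the same loop, as a sketch. It assumes the first octant only (\(0 \le \Delta y \le \Delta x\)), which is what the decision variable above is set up for. The function name `bresenham_line` and returning a list instead of calling set_pixel are choices made for this example.

```python
def bresenham_line(x0, y0, x1, y1):
    """Pixels of a line with slope in [0, 1] and x0 <= x1, integers only."""
    dx = x1 - x0
    dy = y1 - y0
    if not (0 <= dy <= dx):
        raise ValueError("this sketch only handles slopes between 0 and 1")
    d = 2 * dy - dx            # decision variable
    delta_e = 2 * dy           # increment when stepping east
    delta_ne = 2 * (dy - dx)   # increment when stepping north-east
    x, y = x0, y0
    pixels = [(x, y)]
    while x < x1:
        if d <= 0:
            d += delta_e
        else:
            d += delta_ne
            y += 1
        x += 1
        pixels.append((x, y))
    return pixels


print(bresenham_line(0, 0, 5, 2))
# [(0, 0), (1, 0), (2, 1), (3, 1), (4, 2), (5, 2)]
```

The other octants are usually handled by swapping or negating coordinates before and after this loop.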

Visibility

Z-Buffer

- Store current min. z-value for each pixel

- After model transformation, view transformation, projection transformation, and viewport transformation

- Additional buffer for depth values

- Framebuffer stores RGB color values

- Depth buffer (z-buffer) stores depth values

- Storage: additional 16 to 32 bits per pixel
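The depth test itself is small. The following sketch assumes that smaller z means closer to the camera and that buffers are plain nested lists. `make_buffers` and `write_fragment` are illustrative names, not OpenGL calls.

```python
import math


def make_buffers(width, height, background=(0, 0, 0)):
    """Color buffer filled with the background, depth buffer filled with +inf."""
    color = [[background for _ in range(width)] for _ in range(height)]
    depth = [[math.inf for _ in range(width)] for _ in range(height)]
    return color, depth


def write_fragment(color, depth, x, y, z, rgb):
    """Keep the fragment only if it is closer than the stored depth."""
    if z < depth[y][x]:
        depth[y][x] = z
        color[y][x] = rgb
        return True
    return False


color, depth = make_buffers(2, 2)
write_fragment(color, depth, 0, 0, 0.8, (255, 0, 0))  # far red fragment
write_fragment(color, depth, 0, 0, 0.3, (0, 255, 0))  # closer green fragment wins
write_fragment(color, depth, 0, 0, 0.5, (0, 0, 255))  # hidden blue fragment is rejected
```

Because the test is per fragment, triangles can be drawn in any order.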

Image from Wikipedia

Today: Texture & Shadows

Materials & Texture

So far: color/material varies per model or per vertex

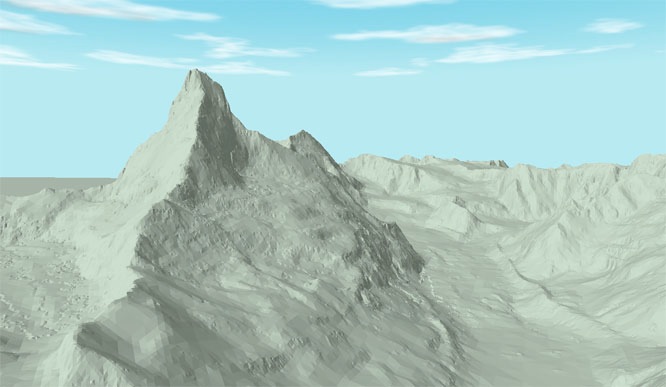

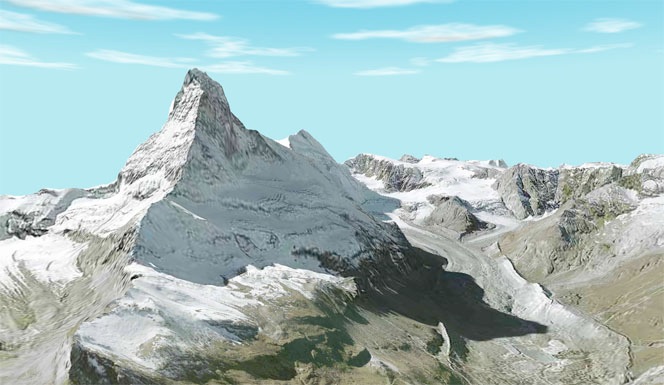

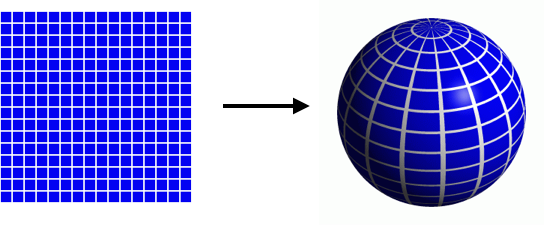

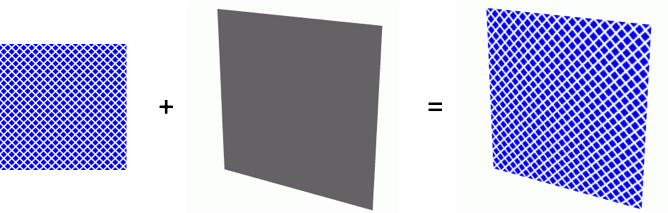

Textures add visual detail without raising geometric complexity: Paste 2D images onto 3D geometry

Geometry, Texture, Textured Mesh

Images from http://www.endoxon.ch

Geometry, +Lighting, +Texture

Images from http://www.3drender.com/jbirn/productions.html

Materials & Texture

- Textures allow us to change many surface properties:

- reflectance (diffuse + specular colors/coefficients)

- normal vector (normal mapping, bump mapping)

- geometry (displacement mapping)

- opacity (alpha mapping)

- reflection/illumination (environment mapping)

- …

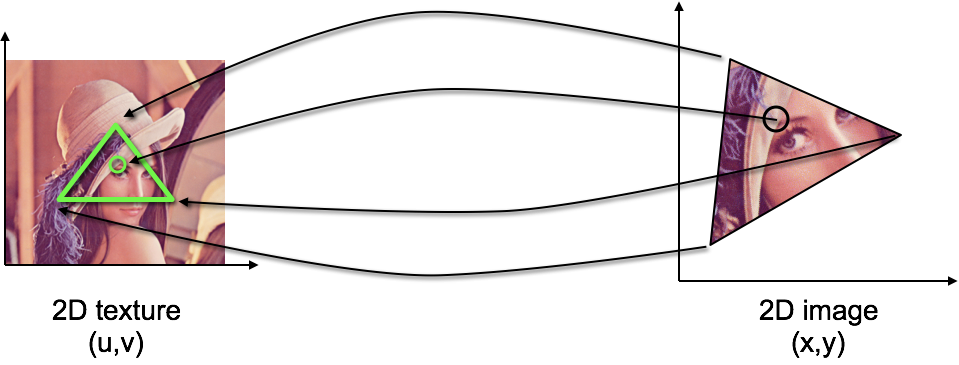

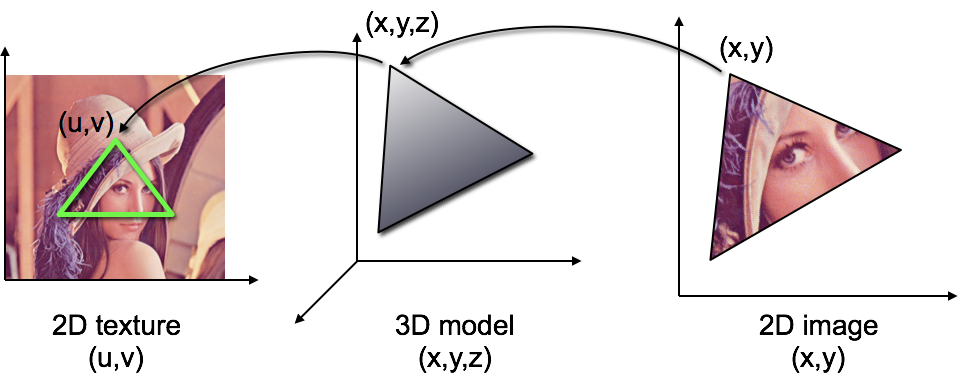

Texturing One Triangle

- Assign 2D texture coordinate \(\vec{u}=(u,v)\) to each vertex

- Interpolate texture coordinate by barycentric coordinates \[ \vec{u}\of{\vec{X}} = \alpha \vec{u}\of{\vec{A}} + \beta \vec{u}\of{\vec{B}} + \gamma \vec{u}\of{\vec{C}} \]

- Fetch color value from texture: \(\vec{c}\of{\vec{X}} = \mathrm{texture}\of{\vec{u}\of{\vec{X}}}\)

Texturing One Triangle

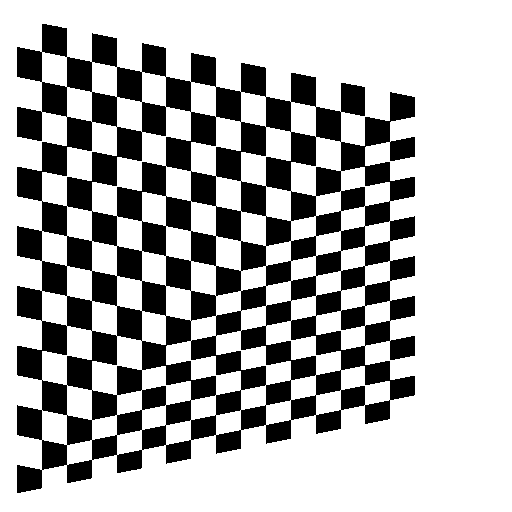

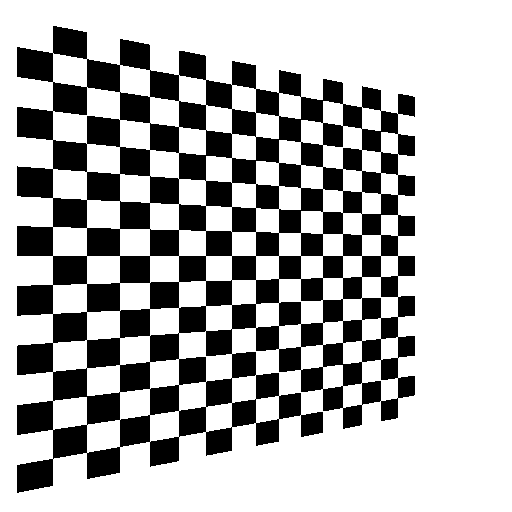

perspectively incorrect

Images from Akenine-Möller, “Real-Time Rendering”

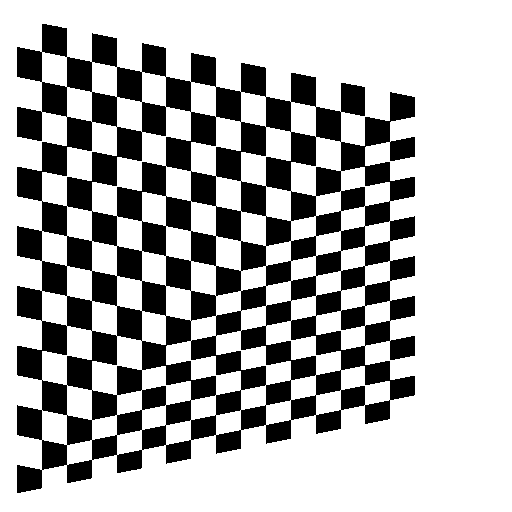

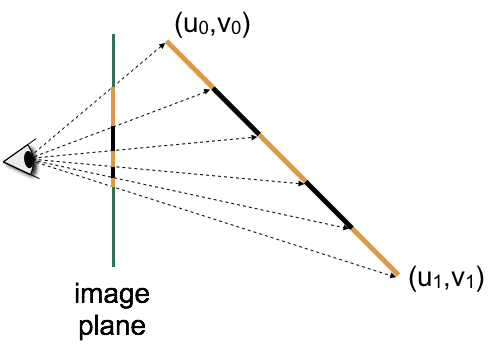

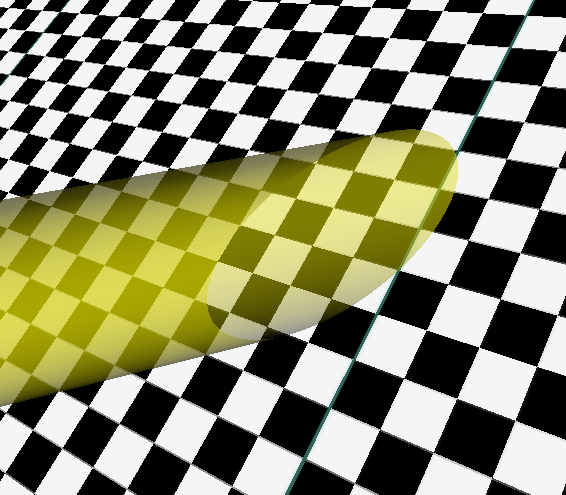

Perspective Interpolation

- Linear interpolation in world coordinates yields nonlinear interpolation in screen coordinates!

- Choose screen-space (noperspective) or perspective-correct (smooth) interpolation for vertex shader outputs (smooth is the default)
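The difference between the two modes can be sketched numerically. The example assumes that screen-space barycentric coordinates and the clip-space \(w\) of each vertex are available. The function names mirror the GLSL qualifiers but are otherwise made up for this illustration.

```python
def interpolate_noperspective(bary, values):
    """Plain screen-space interpolation with barycentric weights."""
    return sum(b * v for b, v in zip(bary, values))


def interpolate_smooth(bary, values, w):
    """Perspective-correct interpolation: interpolate value/w and 1/w, then divide."""
    numerator = sum(b * v / wi for b, v, wi in zip(bary, values, w))
    denominator = sum(b / wi for b, wi in zip(bary, w))
    return numerator / denominator


# Screen-space midpoint of an edge whose endpoints have w = 1 and w = 3.
bary = (0.5, 0.5, 0.0)
u_values = (0.0, 1.0, 0.0)
w_values = (1.0, 3.0, 1.0)
print(interpolate_noperspective(bary, u_values))     # 0.5
print(interpolate_smooth(bary, u_values, w_values))  # approximately 0.25
```

The screen-space midpoint corresponds to a point much closer to the near vertex in 3D, which is why the perspective-correct texture coordinate is 0.25 rather than 0.5. When all \(w\) values are equal, both functions agree.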

Texturing One Triangle

- Assign 2D texture coordinate \(\vec{u}=(u,v)\) to each vertex

- Interpolate texture coordinate by barycentric coordinates in 3D object space

- Fetch color value from texture

Texturing One Triangle

perspectively incorrect

perspectively correct

Images from Akenine-Möller, “Real-Time Rendering”

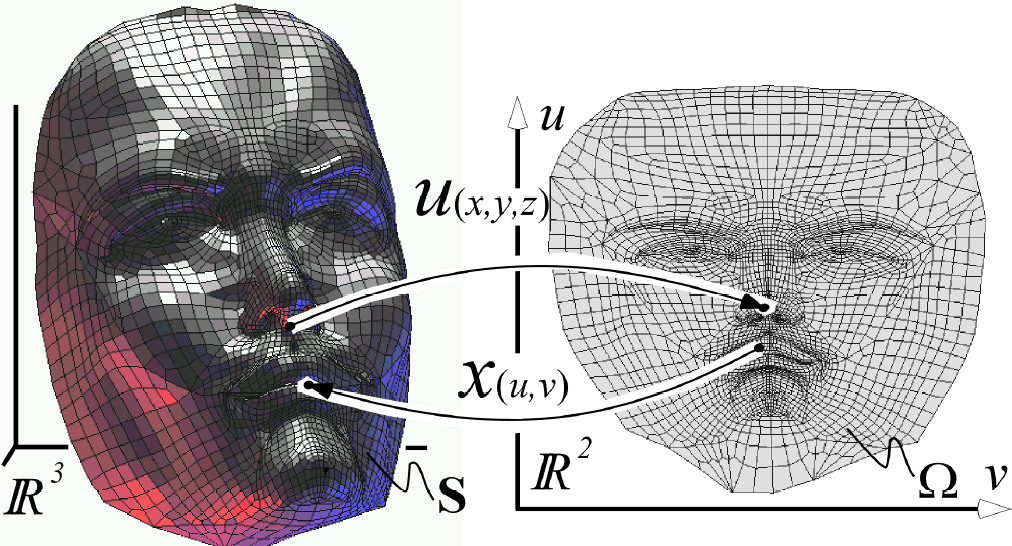

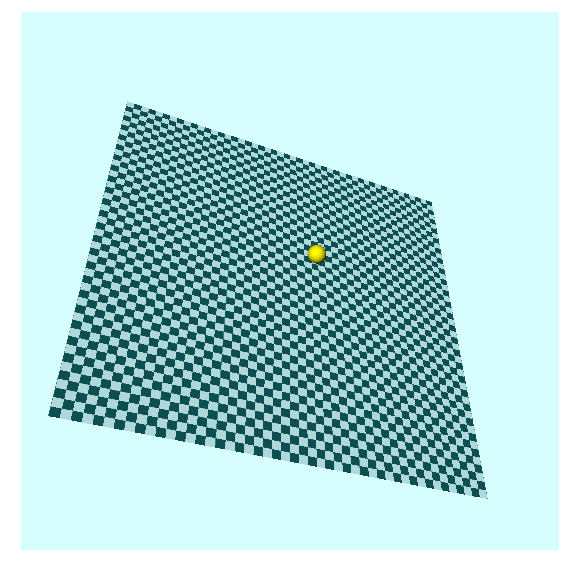

Texturing a Triangle Mesh

- How to find texture coordinates for each vertex?

- Find parameterization: Mapping between 2D texture space and 3D object space

- See lecture 3D Geometry Processing (Spring)

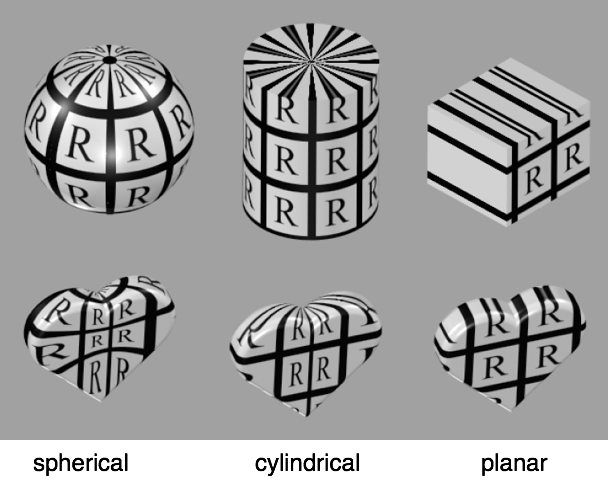

Simple Parameterizations

Images from Akenine-Möller, “Real-Time Rendering”

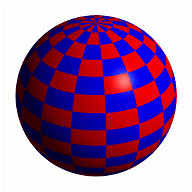

Sphere Parameterization

\[ \vector{ \phi \\ \theta } \mapsto \vector{ \cos\phi \, \cos\theta \\ \sin\phi \, \cos\theta \\ \sin\theta } \]
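A small sketch of this parameterization and its inverse. The mapping of \((\phi, \theta)\) to texture coordinates in \([0,1]^2\) is an assumption made for this example (\(\phi \in [-\pi, \pi]\), \(\theta \in [-\pi/2, \pi/2]\)), and the function names are illustrative.

```python
import math


def sphere_point(phi, theta):
    """Point on the unit sphere for longitude phi and latitude theta."""
    return (
        math.cos(phi) * math.cos(theta),
        math.sin(phi) * math.cos(theta),
        math.sin(theta),
    )


def sphere_uv(x, y, z):
    """Texture coordinates in [0, 1]^2 for a point on the unit sphere."""
    phi = math.atan2(y, x)
    theta = math.asin(max(-1.0, min(1.0, z)))  # clamp against rounding errors
    u = (phi + math.pi) / (2.0 * math.pi)
    v = (theta + math.pi / 2.0) / math.pi
    return u, v


p = sphere_point(0.3, -0.4)
u, v = sphere_uv(*p)
```

At the poles \(\cos\theta = 0\), so every \(\phi\) maps to the same point. This is where the parameterization is most distorted.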

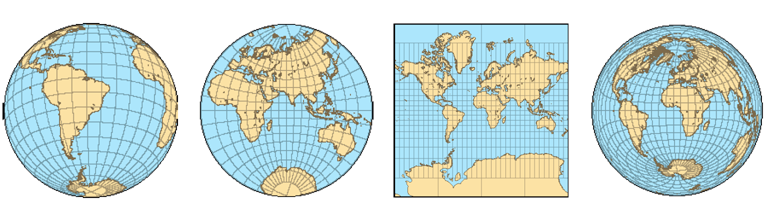

Cartography

- Many ways to parameterize a sphere!

- Some parameterizations have special properties:

- preserve angles (conformal, 2nd image)

- preserve areas (equi-areal, 4th image)

Low-Distortion Parameterization

Texture Filtering

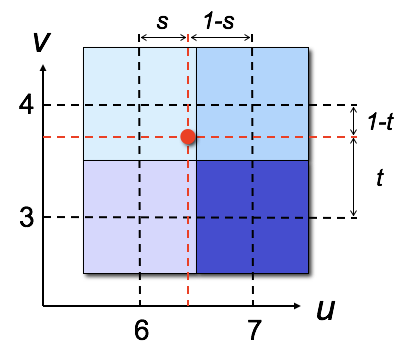

Texture Interpolation

- How to get color value from real-valued texture coordinates \((u,v)\), such as \((u,v) = (6.4, 3.7)\)?

Round to nearest integer coordinate

color = tex[6,4];

Bilinear interpolation of neighboring texture pixels

s = 6.4 - 6; t = 3.7 - 3;

color = (1-s)*(1-t)*tex[6,3] + (1-s)*t*tex[6,4] + s*(1-t)*tex[7,3] + s*t*tex[7,4];
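Both lookups can be written as small functions. This sketch assumes a single-channel texture stored as nested lists and indexed as tex[i][j], with i taken from \(u\) and j from \(v\), and with indices clamped at the border. The clamping and the function names are assumptions of the example.

```python
import math


def sample_nearest(tex, u, v):
    """Round to the nearest integer texel (round half up), clamped to the texture."""
    i = min(max(math.floor(u + 0.5), 0), len(tex) - 1)
    j = min(max(math.floor(v + 0.5), 0), len(tex[0]) - 1)
    return tex[i][j]


def sample_bilinear(tex, u, v):
    """Bilinear interpolation of the four texels surrounding (u, v)."""
    i0, j0 = math.floor(u), math.floor(v)
    s, t = u - i0, v - j0                # fractional parts, e.g. 0.4 and 0.7
    i1 = min(i0 + 1, len(tex) - 1)       # clamp at the border
    j1 = min(j0 + 1, len(tex[0]) - 1)
    return (
        (1 - s) * (1 - t) * tex[i0][j0]
        + (1 - s) * t * tex[i0][j1]
        + s * (1 - t) * tex[i1][j0]
        + s * t * tex[i1][j1]
    )


# Hypothetical 8x5 texture whose value is a linear ramp: tex[i][j] = i + 10*j.
tex = [[i + 10 * j for j in range(5)] for i in range(8)]
print(sample_nearest(tex, 6.4, 3.7))   # 46, the value of tex[6][4]
print(sample_bilinear(tex, 6.4, 3.7))  # approximately 43.4
```

Bilinear interpolation reproduces a linear ramp exactly, so the result matches \(6.4 + 10 \cdot 3.7\) up to rounding.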

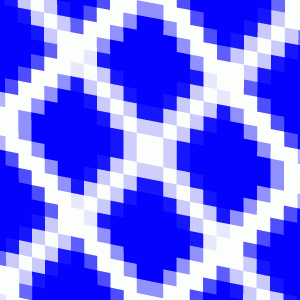

Magnification Filter

nearest, linear

Images from Akenine-Möller, “Real-Time Rendering”

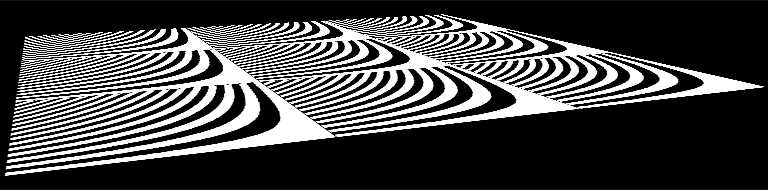

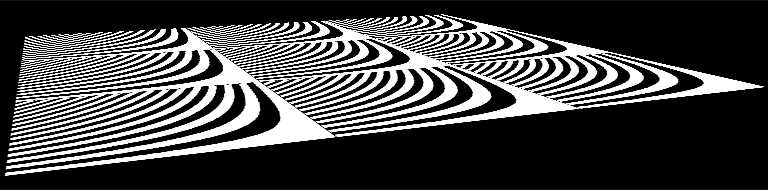

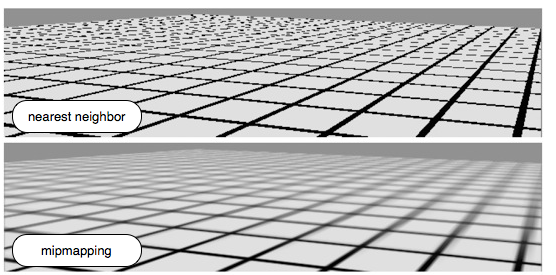

Minification Filter

- Point sampling is the wrong model

- Texture minification leads to aliasing

- Integrate over image pixel’s area in texture space

- Approximated by an ellipse

- Elliptically weighted averaging (EWA filtering)

Minification Filter

EWA filtering

bi-linear filtering

Minification Filter

- EWA filtering is computationally too expensive

- Approximate EWA filtering by

- mip-mapping

- anisotropic texture filtering (not discussed)

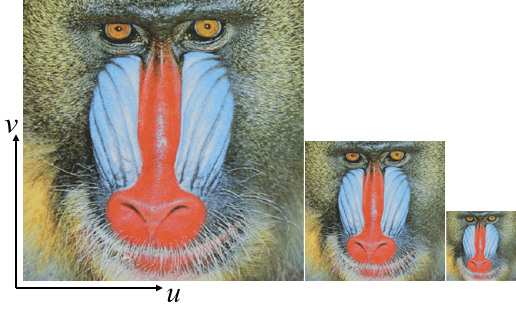

Mip-Mapping

- Store texture at multiple levels-of-detail

- MIP comes from the Latin “multum in parvo”: a multitude in a small space.

- Precompute down-scaled versions of texture image

- Use lower-resolution versions when far from camera

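- OpenGL picks the most suitable image resolution for each per-pixel texture lookup based on the pixel’s footprint in texture space (estimated from screen-space derivatives of the texture coordinates), which grows with distance and viewing angle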
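A minimal sketch of the precomputation and level selection. It assumes a square, power-of-two, single-channel texture, a 2x2 box filter for down-scaling, and selection of the nearest level from a given texels-per-pixel ratio. Real hardware derives that ratio from texture-coordinate derivatives and may blend two levels. All function names are illustrative.

```python
import math


def downsample(level):
    """Halve the resolution by averaging each 2x2 block of texels."""
    n = len(level) // 2
    return [
        [
            (level[2 * i][2 * j] + level[2 * i + 1][2 * j]
             + level[2 * i][2 * j + 1] + level[2 * i + 1][2 * j + 1]) / 4.0
            for j in range(n)
        ]
        for i in range(n)
    ]


def build_mipmaps(texture):
    """Level 0 is the full texture; each further level has half the resolution."""
    levels = [texture]
    while len(levels[-1]) > 1:
        levels.append(downsample(levels[-1]))
    return levels


def select_level(texels_per_pixel, num_levels):
    """Nearest level for a footprint of texels_per_pixel texels along one axis."""
    if texels_per_pixel <= 1.0:
        return 0  # magnification: use the full-resolution texture
    level = int(round(math.log2(texels_per_pixel)))
    return min(level, num_levels - 1)


texture = [[float(4 * i + j) for j in range(4)] for i in range(4)]
levels = build_mipmaps(texture)       # resolutions 4x4, 2x2, 1x1
level = select_level(4.0, len(levels))  # a pixel covering 4 texels per axis uses level 2
```

The full pyramid needs only about one third more memory than the original texture.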

Try it yourself

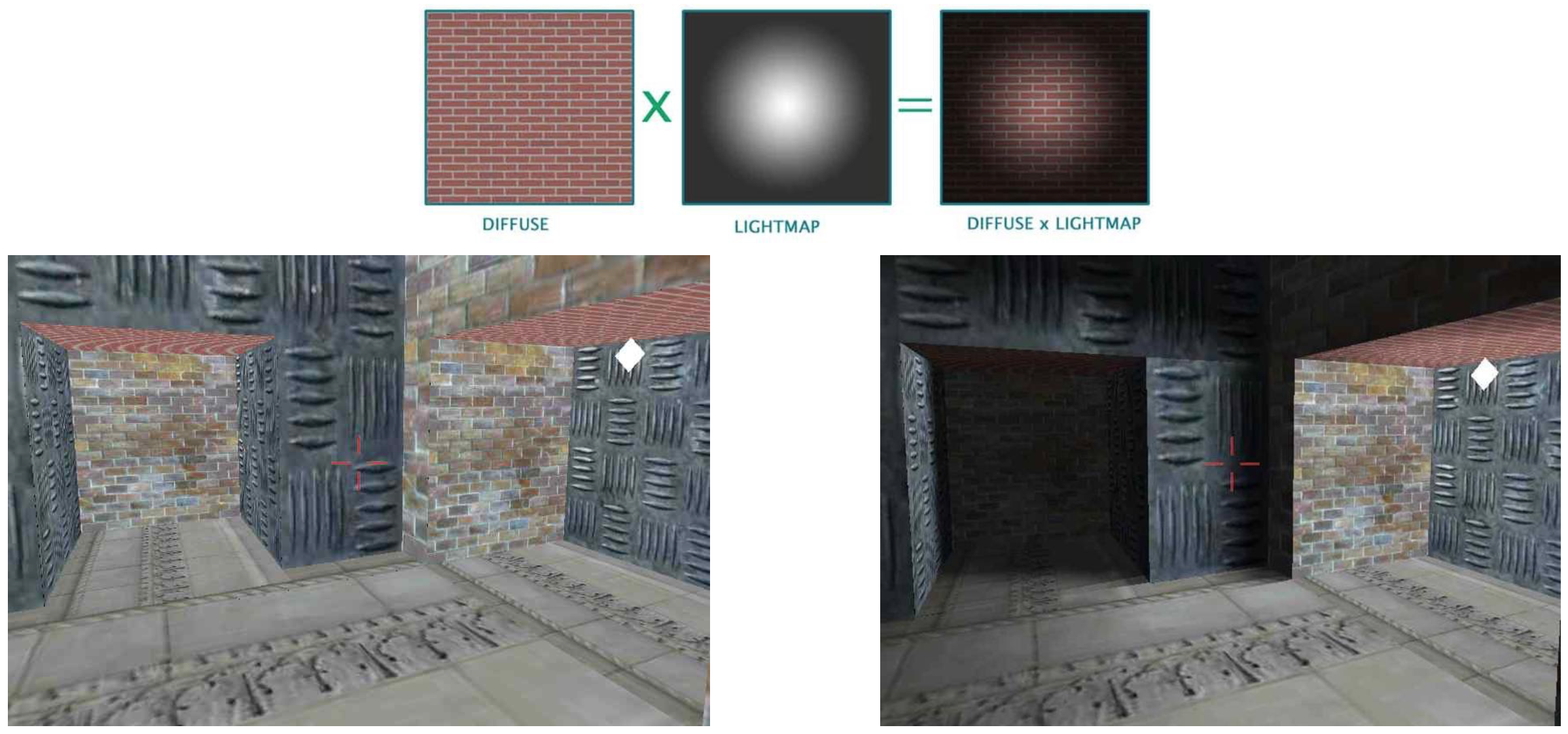

Special Texture Maps

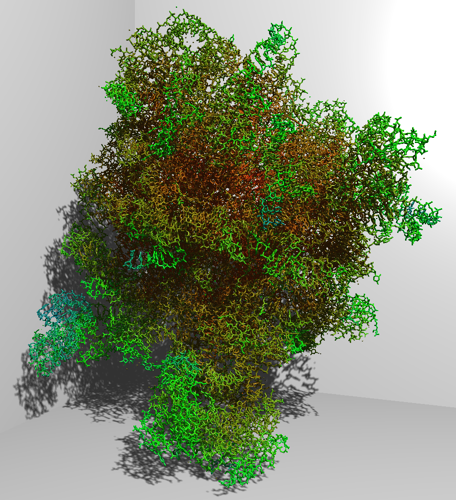

Light Maps

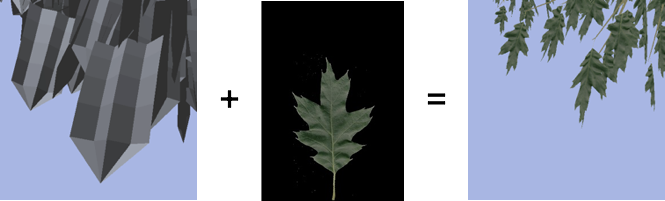

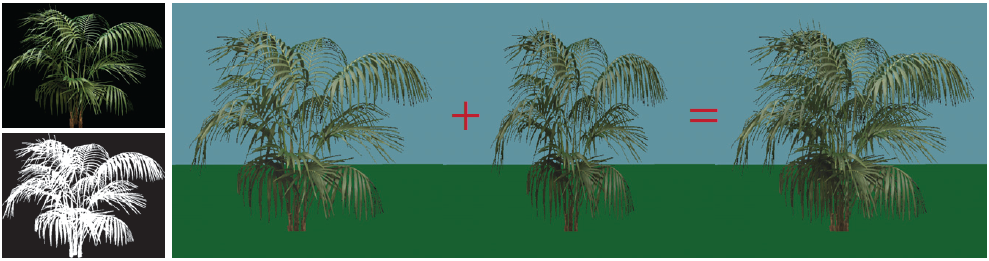

Alpha Maps

- Discard transparent texture pixels (alpha=0) in fragment shader. Frequently used for real-time rendering of plants.

Images courtesy of Oliver Deussen

Image from Akenine-Möller, “Real-Time Rendering”

Alpha Maps

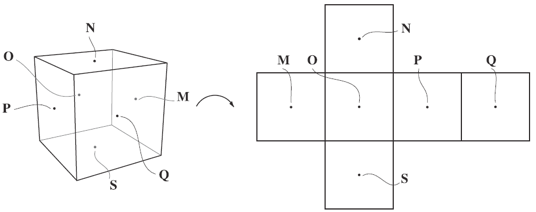

Environment Maps

- Approximate reflections of environment at surface.

- Environment is assumed to be far away from object.

Spherical Environment Maps

Cube Environment Maps

- Cube maps are the preferred representation.

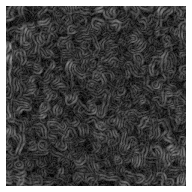

Bump Maps

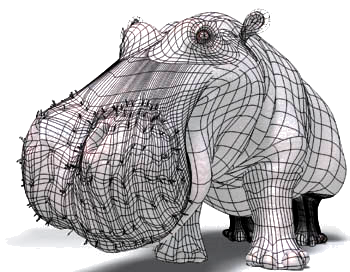

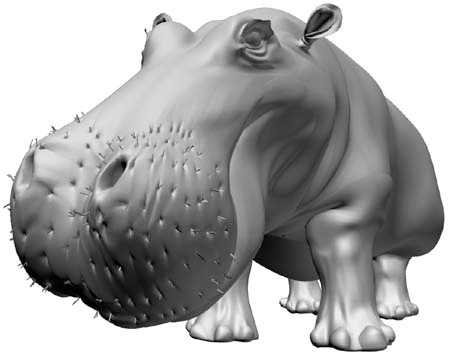

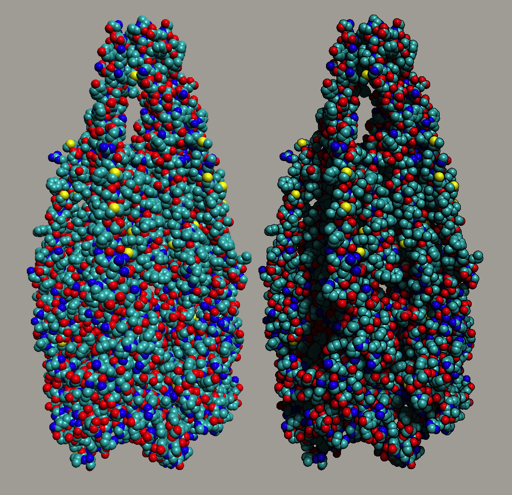

- Add surface detail without increasing geometric complexity

- Perturb surface normal before lighting

- Assume bumps are small compared to geometry

- Bump pattern is taken from a texture

normal rendering, bump map, bump-mapped result

Bump Maps

Coarse mesh

Diffuse map + specular map + bump map + …map

Image from TripleGangers

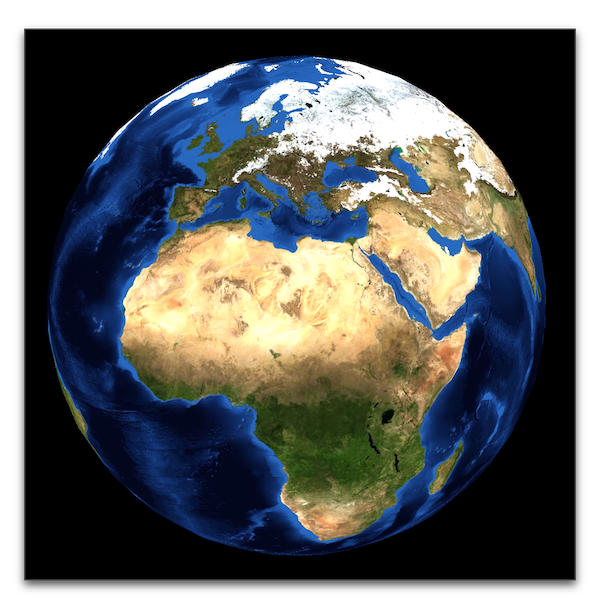

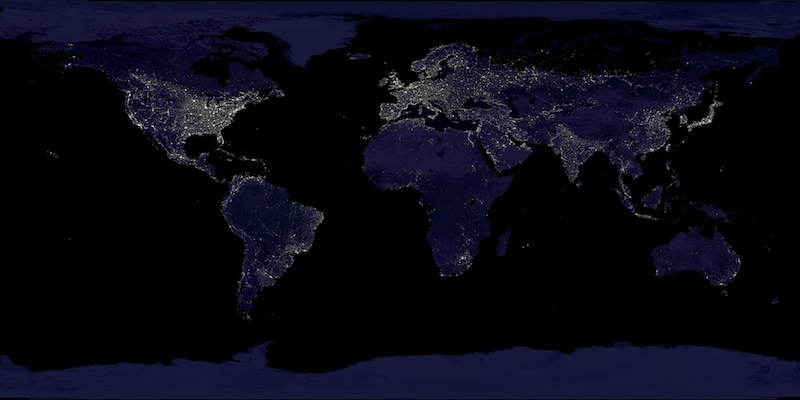

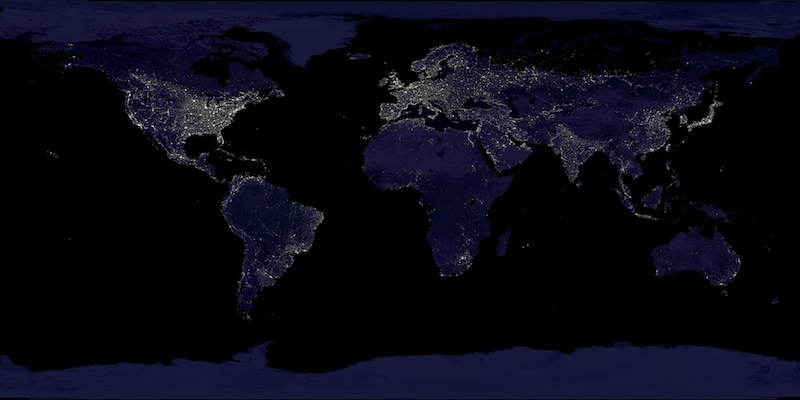

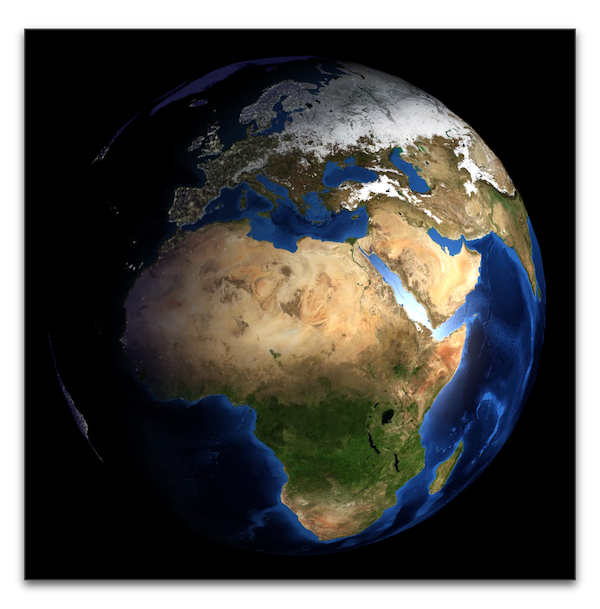

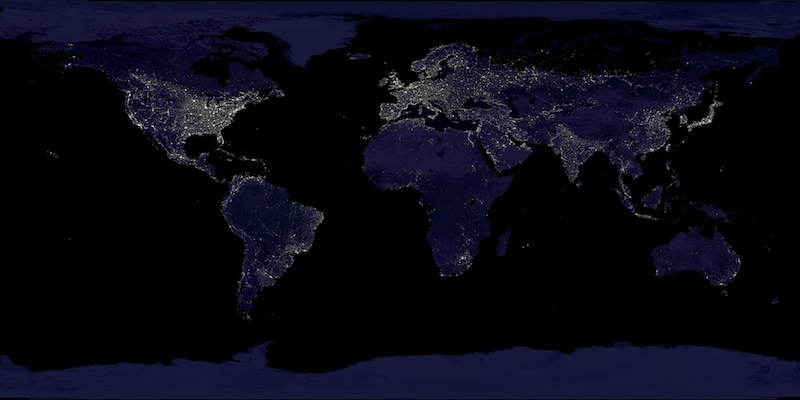

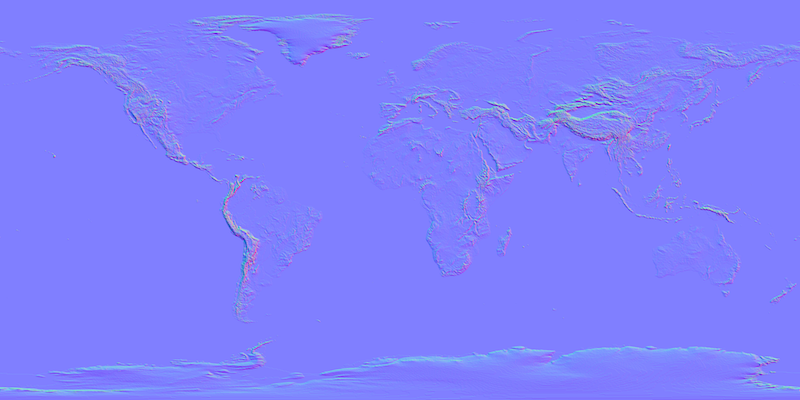

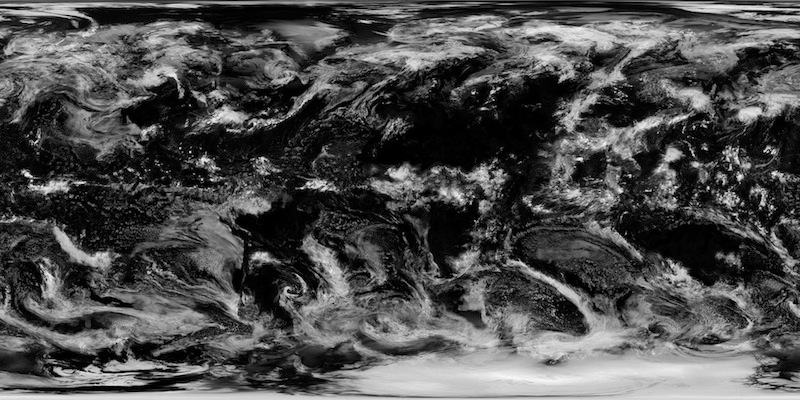

Nice Earth Textures

- NASA project Blue Marble: Next Generation

- Merged from many input images (from 2004)

- Monthly day textures, night texture, clouds, …

2D Texture

+ Diffuse and Specular Lighting

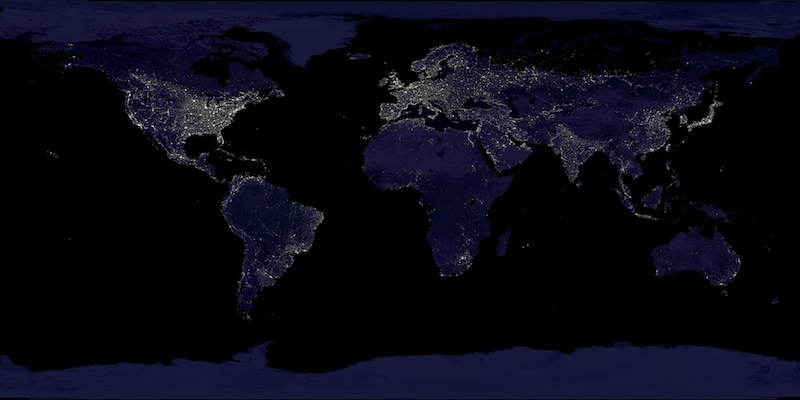

+ Night Texture

+ Specularity Map

+ Normal Map

+ Clouds

Multi-Texturing Earth Viewer

Acknowledgments

Many thanks to Hartmut Schirmacher for providing aligned textures and initial WebGL code!

Beuth Hochschule für Technik Berlin

Literature

- Akenine-Möller, Haines, Hoffman: Real-Time Rendering, Taylor & Francis, 2008.

- Chapter 6

- Shreiner, Sellers, Kessenich, Licea-Kane: OpenGL Programming Guide, 8th edition, 2013.

- Chapter 6

Shadow Maps

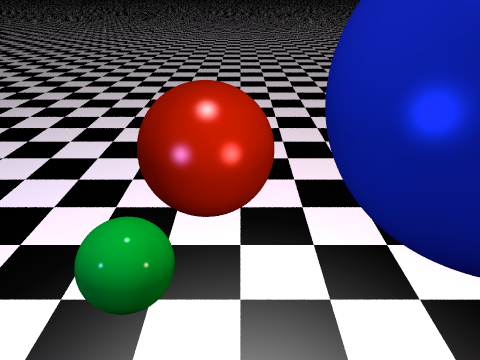

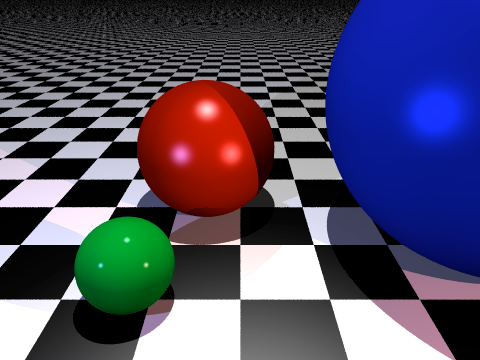

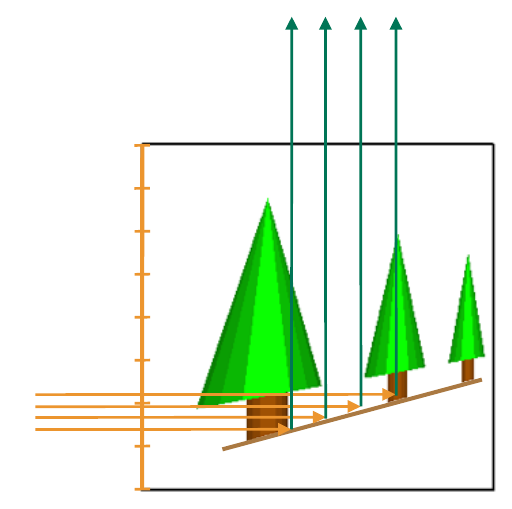

Shadows

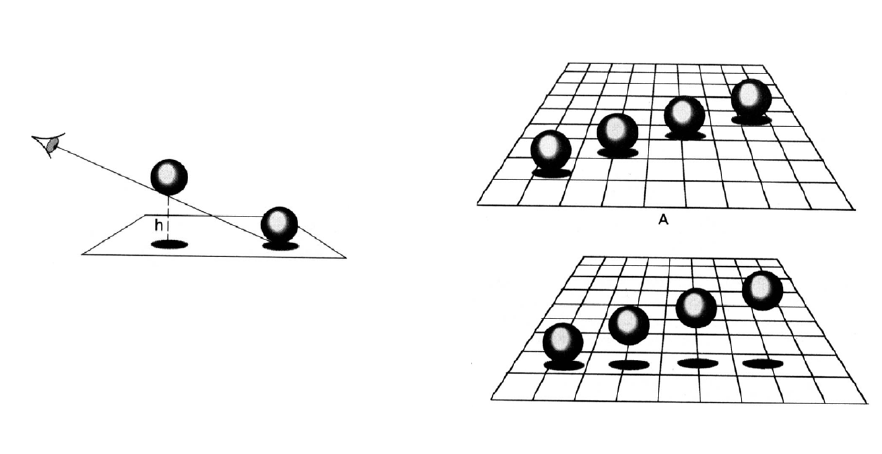

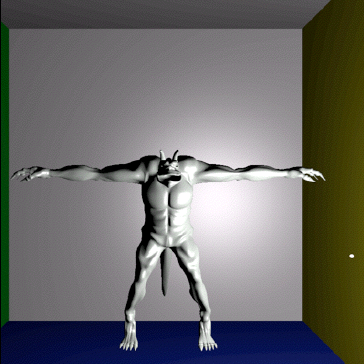

- Shadows are important for 3D depth perception

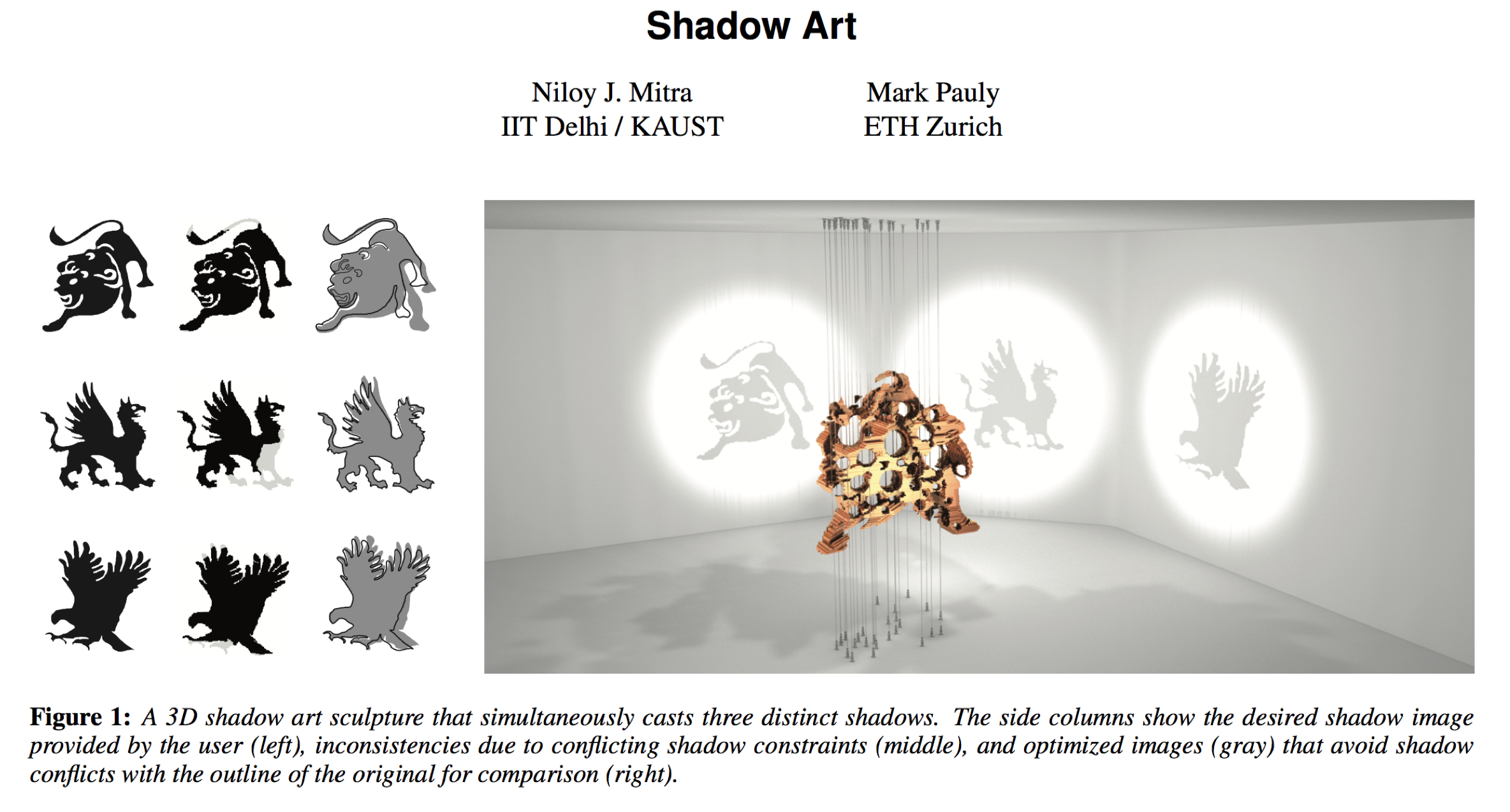

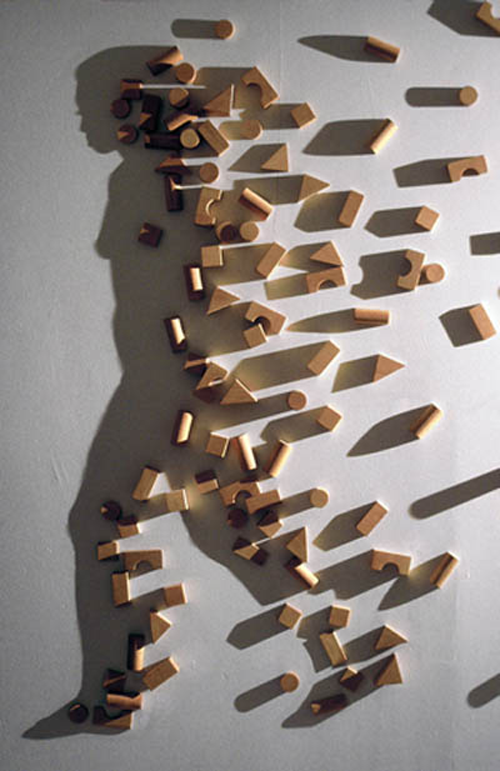

Shadow Art

Shadow Art Research

Shadow Computation

Shadow computation is very similar to visibility determination

- Visibility

- Which objects can be seen from the viewpoint?

- Shadows

- Which objects can be seen from the light source?

Lighting & Shadows

- If a light source is occluded, simply skip its diffuse and specular contribution in the Phong lighting computation

\[ \begin{eqnarray*} I(\vec{p},\vec{n},\vec{v}) &=& I_{\text{ambient}} \\[2mm] &+& \sum_{i} \text{shadow}(\vec{p},\vec{l}_i) \cdot \left( I_{\text{diffuse}}(\vec{p},\vec{n},\vec{l}_i) + I_{\text{specular}}(\vec{p},\vec{n},\vec{v},\vec{l}_i) \right) \\ \\ \text{with} && \text{shadow}(\vec{p},\vec{l}_i) \;=\; \left\{\begin{array}{ll} 1 & \vec{l}_i\text{ is visible from } \vec{p},\\ 0 & \vec{l}_i\text{ is blocked from } \vec{p} \end{array} \right. \end{eqnarray*} \]
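The sum translates directly into a loop. This sketch assumes the diffuse and specular terms and the visibility of each light have already been evaluated for the surface point, and that intensities are scalars. The function name `shade` and the tuple layout are choices made for this example.

```python
def shade(ambient, lights):
    """Phong intensity with a binary shadow term per light.

    lights is a list of (visible, diffuse, specular) tuples, one per light source.
    """
    total = ambient
    for visible, diffuse, specular in lights:
        shadow = 1.0 if visible else 0.0  # shadow(p, l_i) from the formula above
        total += shadow * (diffuse + specular)
    return total


# Two lights: the first is visible, the second is blocked.
intensity = shade(0.1, [(True, 0.5, 0.2), (False, 0.4, 0.3)])
```

The ambient term is never shadowed, so points in full shadow are dark but not black.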

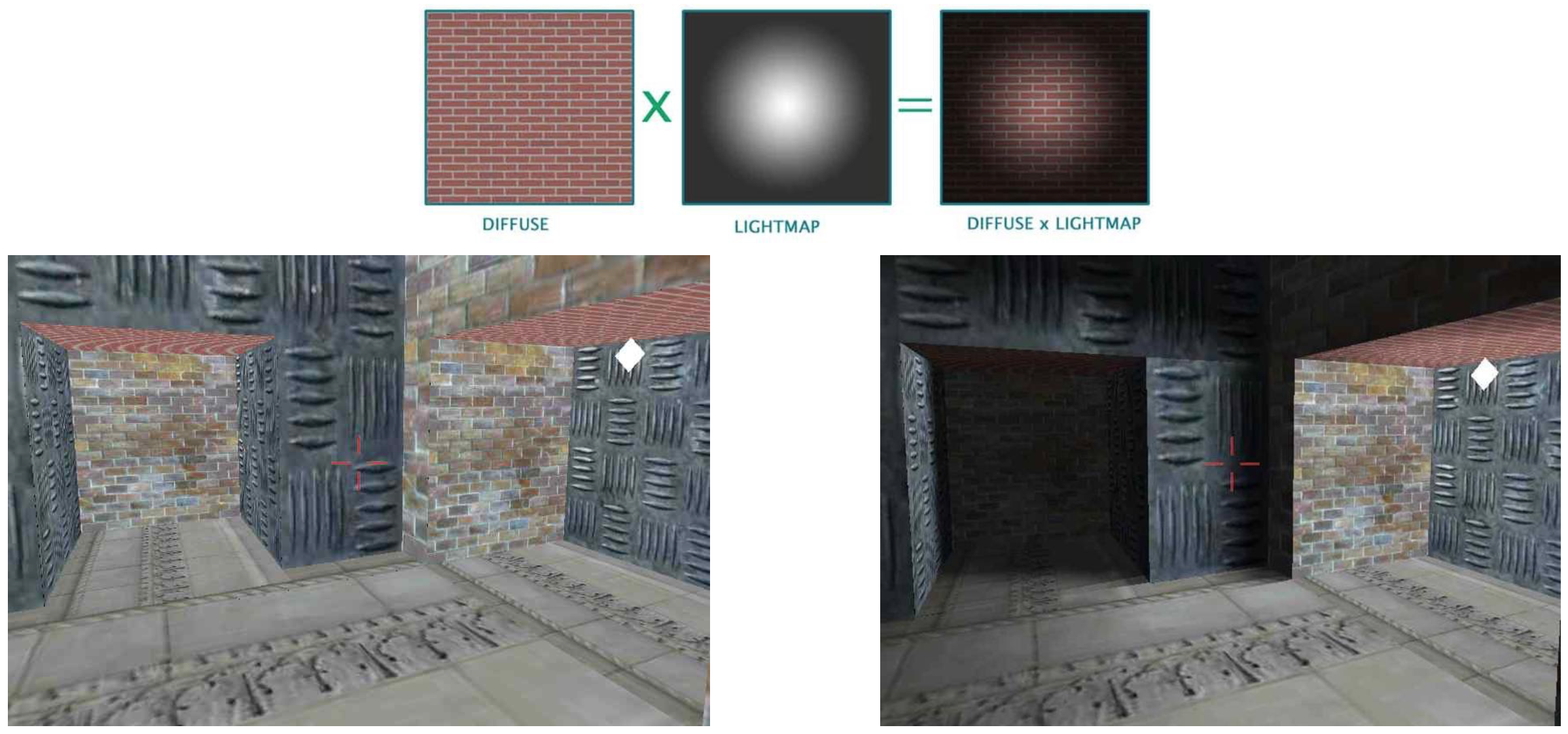

Light Maps & Shadows

- Store precomputed lighting in textures

- Precomputation can take shadows into account

- Works for static scenes only

Shadows in OpenGL

- Shadows are a global effect

- Occluders block light from receiver

- Occluders can move/deform dynamically

- How to achieve global effects with a local rendering pipeline?

- We have to use multiple render passes!

- Two main approaches

- Shadow volumes (object space)

- Shadow maps (image space)

Shadow Computation

- Visibility

- Which objects can be seen from the viewpoint?

- Shadows

- Which objects can be seen from the light source?

\(\Rightarrow\) Apply standard visibility techniques for shadows:

z-buffer \(\rightarrow\) shadow map

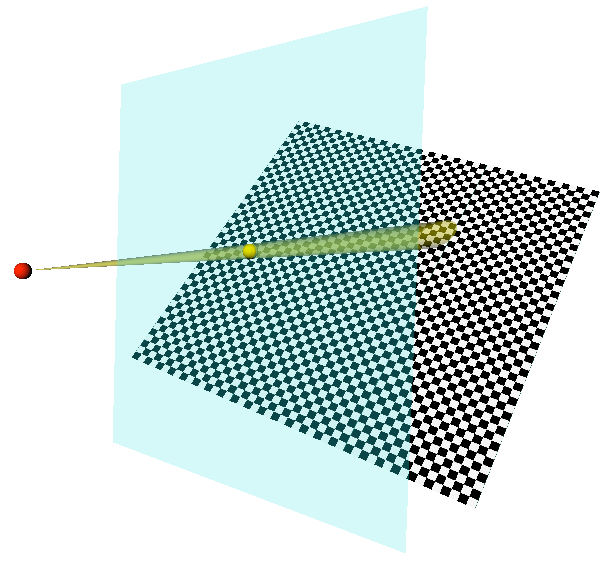

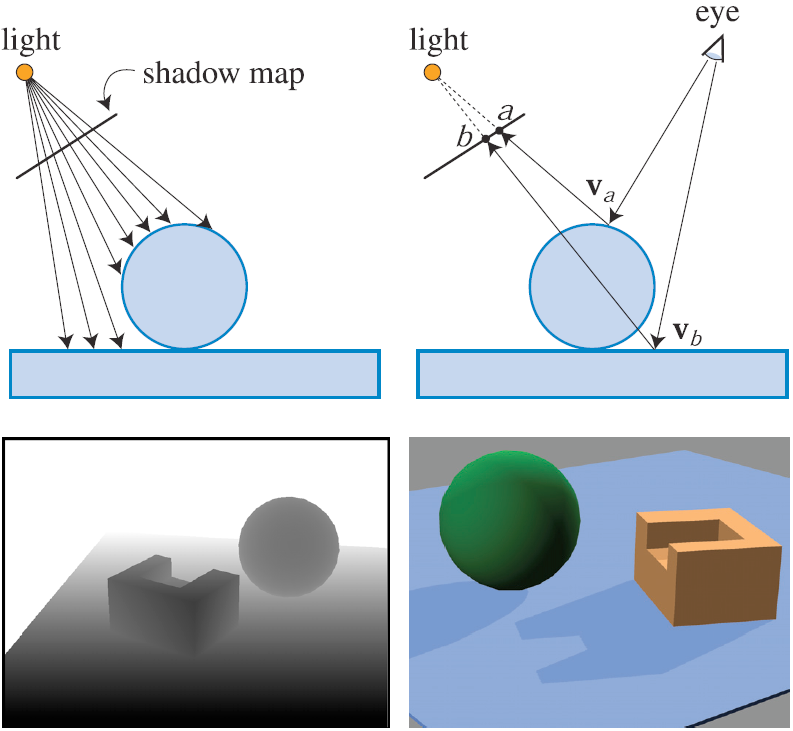

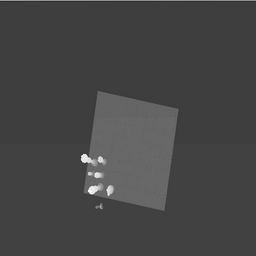

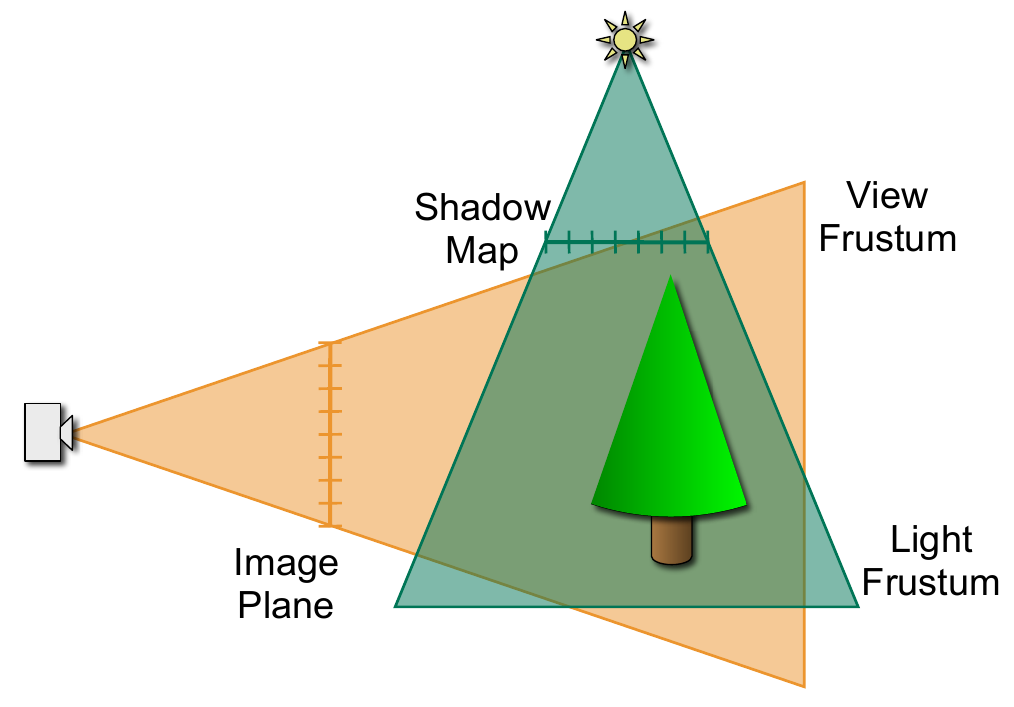

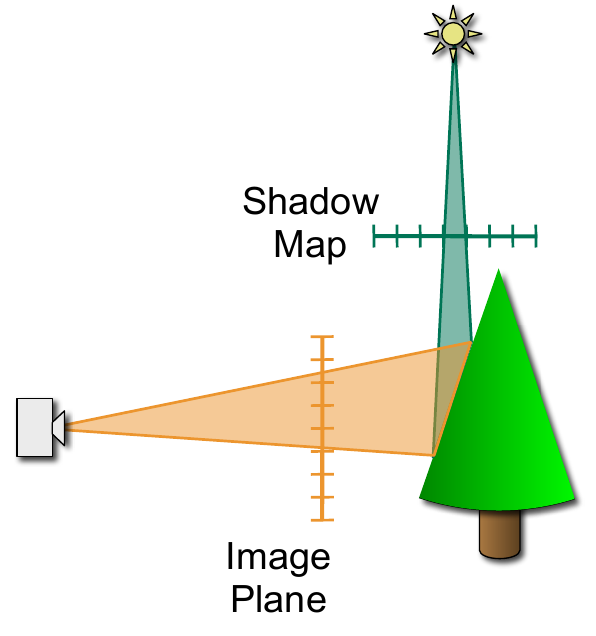

Shadow Maps

- Render scene as seen from light source

- Store z-buffer (holds distance to light)

- Light’s z-buffer is called shadow map

- Render scene from eye point

- Is a certain image-plane pixel \((x,y)\) lit?

- Map it back into world coordinates: \((x', y', z')\)

- Project it into shadow map: \((x'', y'')\)

- If distance point-to-light > depth stored in map \(\Rightarrow\) Point is in shadow!
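The two passes can be sketched on the CPU. The example assumes a light looking straight down from height 10 with an orthographic projection (the hypothetical `top_down_light`), a scene given as a list of points rather than rasterized triangles, and a small depth bias against self-shadowing. The bias is an addition that is common in practice but not part of the steps above.

```python
import math


def top_down_light(p):
    """Hypothetical light projection: map cell (x, y) and distance to the light."""
    return int(p[0]), int(p[1]), 10.0 - p[2]


def build_shadow_map(points, size, light_depth):
    """Pass 1: store the smallest distance to the light for each map cell."""
    shadow_map = [[math.inf] * size for _ in range(size)]
    for p in points:
        x, y, depth = light_depth(p)
        if 0 <= x < size and 0 <= y < size:
            shadow_map[y][x] = min(shadow_map[y][x], depth)
    return shadow_map


def in_shadow(p, shadow_map, light_depth, bias=1e-3):
    """Pass 2: a point is shadowed if something closer to the light covers its cell."""
    x, y, depth = light_depth(p)
    size = len(shadow_map)
    if not (0 <= x < size and 0 <= y < size):
        return False  # outside the light's view: treated as lit in this sketch
    return depth > shadow_map[y][x] + bias


occluder = (1.0, 1.0, 5.0)
floor_below = (1.0, 1.0, 0.0)
floor_free = (2.0, 2.0, 0.0)
shadow_map = build_shadow_map([occluder, floor_below, floor_free], 4, top_down_light)
print(in_shadow(floor_below, shadow_map, top_down_light))  # True
print(in_shadow(floor_free, shadow_map, top_down_light))   # False
print(in_shadow(occluder, shadow_map, top_down_light))     # False
```

Without the bias, rounding errors can make a surface shadow itself.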

Shadow Maps

Image from Akenine-Möller, “Real-Time Rendering”

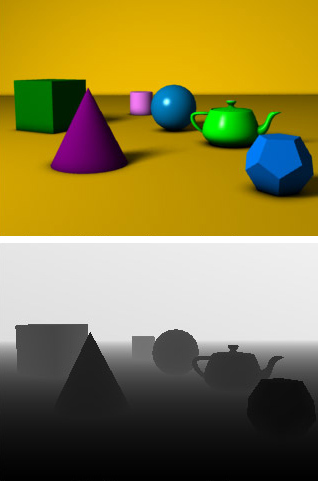

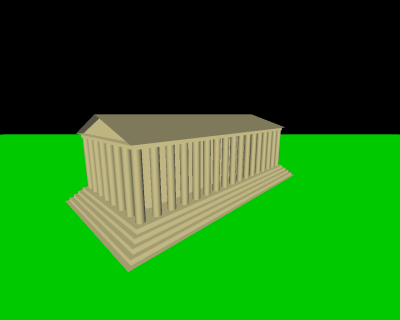

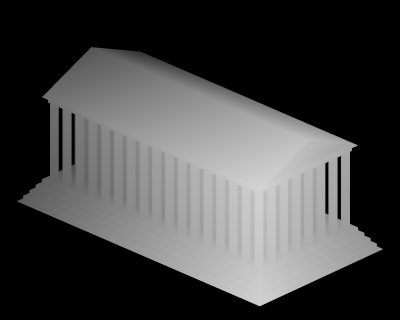

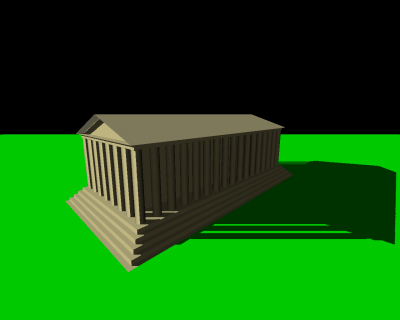

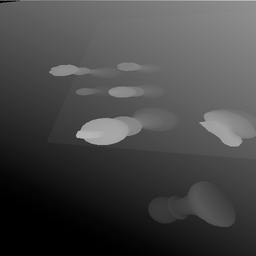

Shadow Maps

Scene without shadows, z-buffer of light’s view, scene with shadows

Seen from light’s view, pixels in shadow

Images from Wikipedia

OpenGL Implementation

- Render scene without light contribution

- For each light source

- Render scene from light source

- Store z-buffer in shadow map

- Render scene with light contribution (accumulate)

- Shadow map look-up for each pixel

- Pixels in shadow are discarded

- Other pixels are lit and rendered

Let’s Try!

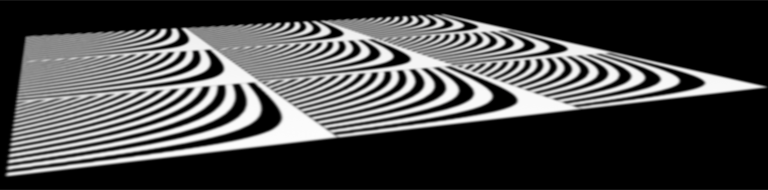

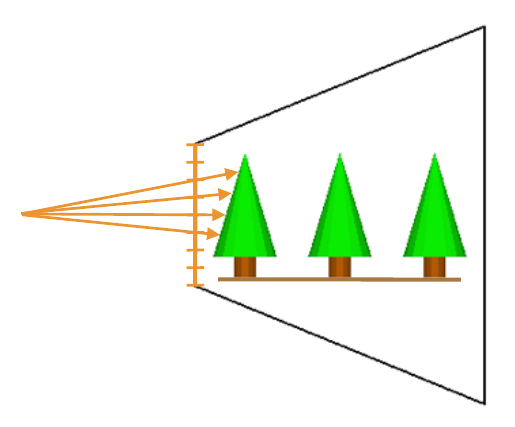

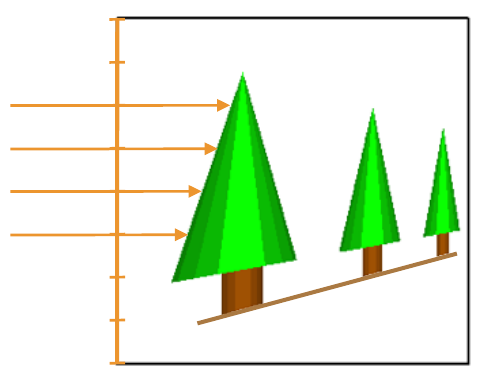

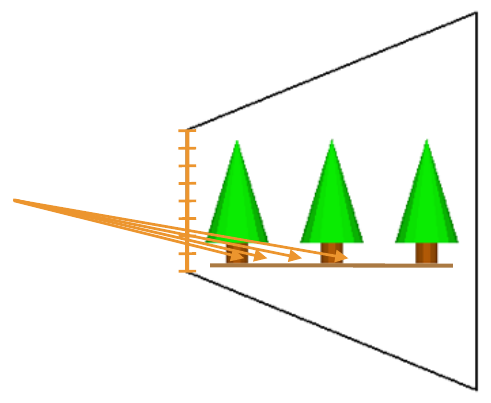

Shadow Map Aliasing

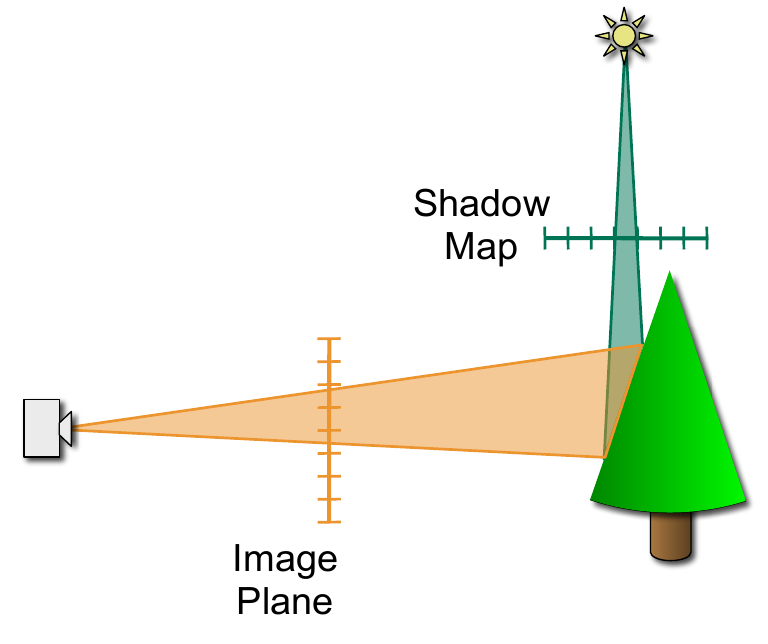

Perspective Shadow Maps

Projection Aliasing

Perspective Aliasing

Perspective Shadow Maps

- Avoid the perspective aliasing!

- Acquire shadow map in post-perspective space

- Normalized Device Coordinates

- Parallel viewing rays

- Optimal configurations

- Directional light parallel to image plane

- Point light in the camera plane

Soft Shadows?

Soft Shadows

- Don’t filter shadow map pixels!

- Corresponds to shadow map test with filtered version of the geometry

- Filter boolean shadow map results

- Sample in current pixel’s vicinity

- How many shadow map tests yield light/shadow?

- Percentage closer filtering
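Percentage closer filtering can be sketched as follows. The example assumes a shadow map stored as nested lists of depths, a square sampling window of a given radius, and a small depth bias. The function name and parameters are illustrative.

```python
def pcf_lit_fraction(shadow_map, x, y, depth, radius=1, bias=1e-3):
    """Fraction of shadow map tests around (x, y) that report 'lit'.

    The boolean test results are averaged, not the stored depth values.
    """
    height, width = len(shadow_map), len(shadow_map[0])
    lit = 0
    total = 0
    for j in range(y - radius, y + radius + 1):
        for i in range(x - radius, x + radius + 1):
            if 0 <= i < width and 0 <= j < height:
                total += 1
                if depth <= shadow_map[j][i] + bias:
                    lit += 1
    return lit / total if total else 1.0


# Left column is covered by an occluder at depth 5, the rest sees the floor at depth 10.
shadow_map = [
    [5.0, 10.0, 10.0],
    [5.0, 10.0, 10.0],
    [5.0, 10.0, 10.0],
]
fraction = pcf_lit_fraction(shadow_map, 1, 1, 10.0)  # 6 of 9 tests are lit
```

The returned fraction replaces the binary shadow term in the lighting computation, which yields a soft transition at shadow boundaries.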

Shadow Maps Summary

- Works for everything that can be rasterized

- Self-shadowing, alpha-textured objects

- Soft shadows by percentage closer filtering

- Fixed resolution shadow map

- Aliasing issues

- Reduced by perspective shadow maps

- Omni-directional lights?

- Needs “shadow cube maps”

Literature

- Hughes et al.: Computer Graphics: Principles and Practice, 3rd Edition, Addison-Wesley, 2014.

- Chapters 5.6, 15

- Shreiner et al.: OpenGL Programming Guide, 8th edition, Addison-Wesley, 2013.

- Chapter 7

- Nvidia GPU Gems

- Chapter Perspective Shadow Maps: Care and Feeding

Literature

- McGuire et al.: Fast, Practical, and Robust Shadow Volumes, Nvidia Tech Report, 2002.

- Everitt et al.: Hardware Shadow Mapping, Nvidia Tech Report, 2005.

- Stamminger & Drettakis: Perspective Shadow Maps, SIGGRAPH 2002.